Emmet - Brixx

In our showcase we demonstrate the power of Artificial Neural Networks through the specialized recognition and classification of interlocking toy bricks. This project serves as a window into the wide range of possibilities offered by current AI technology, and illustrates just how deeply and precisely machines can “see” today.

Practical Application

Brick recognition is a good example of what AI can do when it comes to detecting subtle differences and details. In addition, a successful classification could be of great use in practical applications, such as automating sorting and storage processes or supporting assembly instructions.

System Requirements

The system must be able to accurately identify interlocking toy bricks in photographs, regardless of their position, orientation or lighting conditions.

Classification of the detected blocks

After recognition, the bricks are classified according to their specific shape and size. The system must be able to distinguish between different types of bricks.

Color determination

In addition to classifying the shape of each brick, the system must be able to determine the specific color of each detected brick. This includes the ability to detect subtle nuances and gradations.

Linking to building block sets

After classification and color determination, the system should be able to link the identified bricks to the sets to which they belong. This mapping facilitates inventory management and can help users identify missing or specific pieces in their collections.
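As a minimal sketch of how such a link could work, the example below keeps an inverted index from a (shape, color) pair to the sets that contain it, and compares detected bricks against a set's parts list to find missing pieces. The set names, part names, counts and helper functions are all hypothetical and only illustrate the idea; they do not describe our actual data model.

```python
from collections import defaultdict

# Hypothetical parts lists: set id -> {(shape, color): required count}.
SET_INVENTORY = {
    "set-A": {("brick_2x4", "red"): 4, ("plate_1x2", "blue"): 2},
    "set-B": {("brick_2x4", "red"): 1, ("tile_1x1", "white"): 6},
}


def build_index(inventory):
    """Map each (shape, color) part to the ids of all sets that contain it."""
    index = defaultdict(set)
    for set_id, parts in inventory.items():
        for part in parts:
            index[part].add(set_id)
    return index


def missing_parts(set_id, detected, inventory):
    """Return the parts (with counts) still missing to complete a set."""
    missing = {}
    for part, needed in inventory[set_id].items():
        have = detected.get(part, 0)
        if have < needed:
            missing[part] = needed - have
    return missing


index = build_index(SET_INVENTORY)

# Which sets could a detected red 2x4 brick belong to?
candidate_sets = sorted(index[("brick_2x4", "red")])

# Which pieces of "set-A" are missing, given the detected bricks?
detected = {("brick_2x4", "red"): 3, ("plate_1x2", "blue"): 2}
gaps = missing_parts("set-A", detected, SET_INVENTORY)
```

An inverted index keeps the lookup per detected brick cheap even when many sets share common parts.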

Data set

Our project is built entirely on data we created ourselves. Using automated procedures, we have built a comprehensive dataset of over 650,000 real photos of interlocking toy bricks, covering a total of 600 different classes of bricks. The photos were taken under different conditions and from different perspectives to ensure the broadest possible coverage. In addition to these real photos, we developed a pipeline that allows us to generate synthetic datasets.

Training

All training steps and data processing are performed in-house. This guarantees that all data remains under our control and is never forwarded to third parties. For the training of our models we rely on our in-house GPU cluster.

Accuracy

96.06%

Accuracy is the ratio of correct predictions to the total number of predictions.

Loss

0.15

The loss is the value of the cost function and indicates how far the model’s predictions are from the actual results.

Recall

97.61%

Recall measures how many of the actually positive elements the model also recognized as positive.

Precision

95.56%

Precision indicates the ratio of correctly positively predicted elements to the total number of elements predicted as positive.

F1-Score

96.07%

This is the harmonic mean of Precision and Recall and provides a single, balanced measure of model quality.
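The definitions above can be sketched in a few lines of Python. The example assumes a single class with binary confusion counts (true/false positives and negatives); the counts are purely illustrative and are not taken from our evaluation. For a multi-class model, these values are usually averaged over all classes, in which case the averaged F1-Score need not equal the harmonic mean of the averaged Precision and Recall.

```python
def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> dict:
    """Compute accuracy, precision, recall and F1 from binary confusion counts."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    # F1 is the harmonic mean of precision and recall.
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {
        "accuracy": accuracy,
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


# Illustrative counts only (not our real results).
metrics = classification_metrics(tp=80, fp=20, fn=10, tn=90)
for name, value in metrics.items():
    print(f"{name}: {value:.2%}")
```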

Optimization

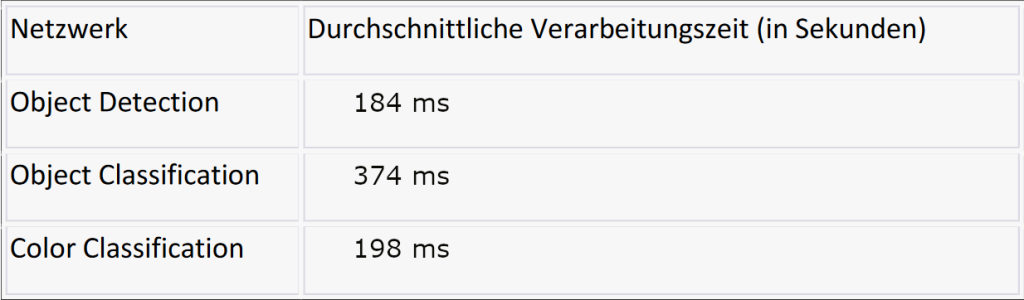

Through targeted optimizations of our system architecture and the algorithms used, we considerably improved processing speed. Originally, our server-side processing took 30,000 milliseconds (30 seconds) per image. With the improvements, we reduced this to just 150 milliseconds, a 200-fold speedup.

For our tests, we used images that each contained 50 interlocking toy bricks.
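The figures above translate into a speedup factor and an average time per brick as follows. This is a small arithmetic sketch; the per-brick value simply divides the image time by the 50 bricks in a test image and assumes, as a simplification, that the time is spread evenly across bricks.

```python
BEFORE_MS = 30_000      # original server-side time per image
AFTER_MS = 150          # optimized server-side time per image
BRICKS_PER_IMAGE = 50   # bricks in each test image


def speedup(before_ms: float, after_ms: float) -> float:
    """How many times faster the optimized pipeline is."""
    return before_ms / after_ms


def per_brick_ms(total_ms: float, bricks: int) -> float:
    """Average time per brick, assuming an even split across bricks."""
    return total_ms / bricks


speedup_factor = speedup(BEFORE_MS, AFTER_MS)
per_brick_before = per_brick_ms(BEFORE_MS, BRICKS_PER_IMAGE)
per_brick_after = per_brick_ms(AFTER_MS, BRICKS_PER_IMAGE)

print(f"speedup: {speedup_factor:.0f}x")
print(f"per brick: {per_brick_before:.0f} ms -> {per_brick_after:.0f} ms")
```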

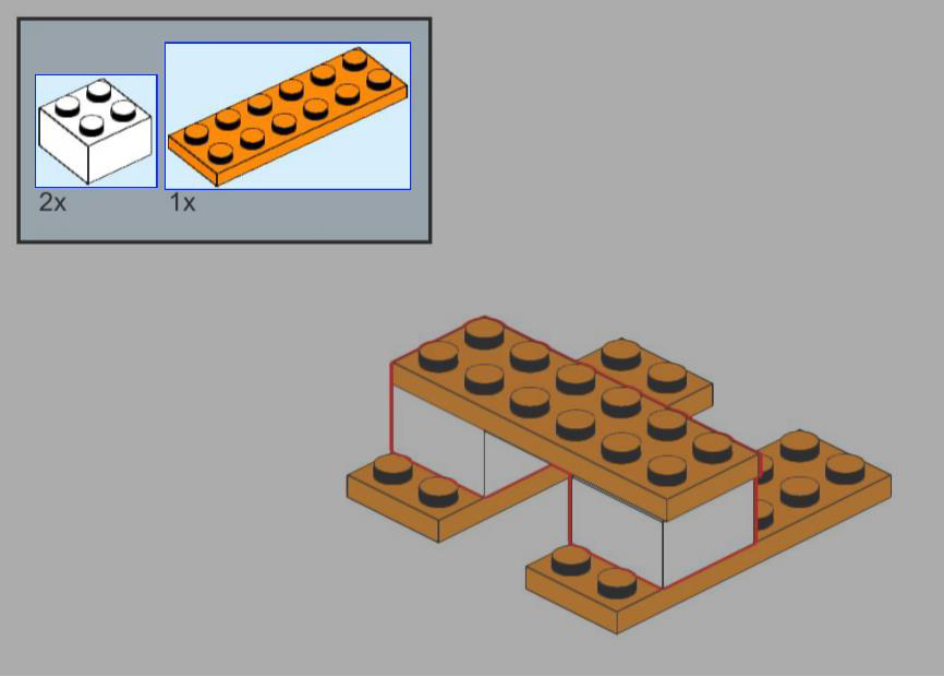

Integration of building instructions

During the project, we made a significant advance: integrating the processing of building instructions. This allows us not only to extract valuable additional information, but also to assign each recognized brick to a specific page and its position on that page.

Future work

One of our primary goals for further development is to optimize the processing speed of our classification network. In its current state, our system requires 47 ms to process an image, which corresponds to a rate of about 21 images per second. To enable seamless real-time interaction, we are aiming for a processing rate of 60 frames per second. This level of speed would not only significantly improve the user experience, but also open the door to new application areas such as:

- Automated quality control on production lines.

- Real-time monitoring and optimization of production processes.

- Rapid detection and sorting of components in assembly lines.
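The relationship between latency and frame rate behind this goal can be sketched as follows. The calculation assumes images are processed strictly one after another, without batching or pipelining; under that assumption, 60 frames per second leaves a budget of roughly 16.7 ms per image.

```python
CURRENT_LATENCY_MS = 47.0   # current processing time per image
TARGET_FPS = 60.0           # target frame rate


def frames_per_second(latency_ms: float) -> float:
    """Frame rate when one image is processed at a time."""
    return 1000.0 / latency_ms


def latency_budget_ms(target_fps: float) -> float:
    """Maximum time per image that still sustains the target frame rate."""
    return 1000.0 / target_fps


current_fps = frames_per_second(CURRENT_LATENCY_MS)
budget_ms = latency_budget_ms(TARGET_FPS)
required_speedup = CURRENT_LATENCY_MS / budget_ms

print(f"current rate: {current_fps:.1f} fps")
print(f"budget at {TARGET_FPS:.0f} fps: {budget_ms:.1f} ms per image")
print(f"required speedup: {required_speedup:.2f}x")
```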

Our plans rely primarily on our deep expertise in AI. We intend to commercialize this know-how by releasing an app, likewise developed in-house, for all popular mobile operating systems. In this way we ensure not only the quality of the application, but also a high standard of data privacy.

EMMET SOFTWARE LABS

Emmet Software Labs GmbH & Co. KG

Hertzstr. 6

32052 Herford

Phone: +49 5221-763 999-10

Email: info@emmet-software-labs.com